Of late I’ve been spending some time looking at the Intranet Bounce Rate on an enterprise social media project I’m working on for a large multinational. For plain Bounce Rate (rather than Intranet Bounce Rate), I’ll take the definition found on Wikipedia today:

It essentially represents the percentage of initial visitors to a site who “bounce” away to a different site, rather than continue on to other pages within the same site.

The formula used to calculate bounce rate is: Bounce Rate = Total Number of Visits Viewing One Page ÷ Total Number of Visits
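As a quick illustration of that formula, here is a minimal Python sketch. The function name `bounce_rate` and the visit counts are made up for the example; they are not figures from the project.

```python
def bounce_rate(single_page_visits: int, total_visits: int) -> float:
    """Return the bounce rate as a percentage of all visits."""
    if total_visits <= 0:
        raise ValueError("total_visits must be positive")
    if not 0 <= single_page_visits <= total_visits:
        raise ValueError("single_page_visits must be between 0 and total_visits")
    # Bounce Rate = visits viewing one page / total visits
    return 100.0 * single_page_visits / total_visits


# Hypothetical numbers: 180 of 1,200 visits viewed only one page.
rate = bounce_rate(180, 1200)
print(f"Bounce rate: {rate:.1f}%")  # Bounce rate: 15.0%
```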

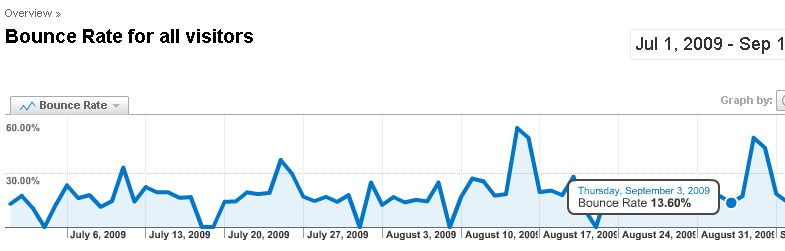

The metrics produced by Google Analytics look quite good to me, at least by the usual industry standards:

Going by the figures the Wikipedia article cites, this is very good indeed:

Google.com analytics specialist Avinash Kaushik has stated:

“It is really hard to get a bounce rate under 20%, anything over 35% is cause for concern, 50% (above) is worrying.”

But is this good for an intranet, or for an enterprise social network site? A high bounce rate on a large corporate intranet might mean that users are happiest when they bounce away quickly because they’ve found what they want. Here, high Bounce Rate = Good? And on an enterprise social network site, what does intranet bounce rate really mean?

Neither Bing nor Google offers anything on this that I could see. Indeed, when I search for ‘Intranet Bounce Rate’ on Google, it kindly asks: ‘Did you mean Internet?’!!

p.s. One interesting point – Saturdays generate the high spikes. Why?
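A first step toward answering that might be to break the bounce rate down by weekday and look at the visit counts alongside it, since a day with very few visits can swing the percentage a long way. The sketch below assumes a hypothetical export of one `(date, pages_viewed)` pair per visit; the function name and the sample data are invented for illustration and are not the project's real numbers.

```python
from collections import defaultdict
from datetime import date

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def bounce_rate_by_weekday(visits):
    """Map weekday name -> (visit count, bounce rate in percent).

    `visits` is an iterable of (date, pages_viewed) pairs, one per visit.
    """
    totals = defaultdict(int)
    bounces = defaultdict(int)
    for day, pages_viewed in visits:
        name = WEEKDAYS[day.weekday()]  # Monday is 0
        totals[name] += 1
        if pages_viewed == 1:  # a single-page visit counts as a bounce
            bounces[name] += 1
    return {name: (totals[name], 100.0 * bounces[name] / totals[name])
            for name in totals}


# Hypothetical sample: four Wednesday visits, two Saturday visits.
sample = [
    (date(2024, 1, 3), 1), (date(2024, 1, 3), 3),
    (date(2024, 1, 3), 5), (date(2024, 1, 3), 2),
    (date(2024, 1, 6), 1), (date(2024, 1, 6), 4),
]
for name, (count, rate) in bounce_rate_by_weekday(sample).items():
    print(f"{name}: {count} visits, {rate:.1f}% bounce rate")
```

In this made-up sample a single Saturday bounce is enough to double the rate relative to Wednesday, which is why the visit count is reported next to the percentage.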

p.p.s. Some excellent resources from my old colleague at Derby Uni, Dr Dave Chaffey, to mull over: Bounce rates in Web design articles.


